Insights on Innovation, R&D, and IP

Perspectives on patents, scientific research, emerging technologies, and the strategies shaping modern R&D

Executive Summary

In 2024, US patent infringement jury verdicts totaled $4.19 billion across 72 cases. Twelve individual verdicts exceeded $100 million. The largest single award, $857 million in General Access Solutions v. Cellco Partnership (Verizon), exceeded the annual R&D budget of many mid-market technology companies. In the first half of 2025 alone, total damages reached an additional $1.91 billion.

The consequences of incomplete patent intelligence are not abstract. In what has become one of the most instructive IP disputes in recent history, Masimo’s pulse oximetry patents triggered a US import ban on certain Apple Watch models, forcing Apple to disable its blood oxygen feature across an entire product line, halt domestic sales of affected models, invest in a hardware redesign, and ultimately face a $634 million jury verdict in November 2025. Apple—a company with one of the most sophisticated intellectual property organizations on earth—spent years in litigation over technology it might have designed around during development.

For organizations with fewer resources than Apple, the risk calculus is starker. A mid-size materials company, a university spinout, or a defense contractor developing next-generation battery technology cannot absorb a nine-figure verdict or a multi-year injunction. For these organizations, the patent landscape analysis conducted during the development phase is the primary risk mitigation mechanism. The quality of that analysis is not a matter of convenience. It is a matter of survival.

And yet, a growing number of R&D and IP teams are conducting that analysis using general-purpose AI tools—ChatGPT, Claude, Microsoft Co-Pilot—that were never designed for patent intelligence and are structurally incapable of delivering it.

This report presents the findings of a controlled comparison study in which identical patent landscape queries were submitted to four AI-powered tools: Cypris (a purpose-built R&D intelligence platform), ChatGPT (OpenAI), Claude (Anthropic), and Microsoft Co-Pilot. Two technology domains were tested: solid-state lithium-sulfur battery electrolytes using garnet-type LLZO ceramic materials (freedom-to-operate analysis), and bio-based polyamide synthesis from castor oil derivatives (competitive intelligence).

The results reveal a significant and structurally persistent gap. In Test 1, Cypris identified over 40 active US patents and published applications with granular FTO risk assessments. Claude identified 12. ChatGPT identified 7, several with fabricated attribution. Co-Pilot identified 4. Among the patents surfaced exclusively by Cypris were filings rated as “Very High” FTO risk that directly claim the technology architecture described in the query. In Test 2, Cypris cited over 100 individual patent filings with full attribution to substantiate its competitive landscape rankings. No general-purpose model cited a single patent number.

The most active sectors for patent enforcement—semiconductors, AI, biopharma, and advanced materials—are the same sectors where R&D teams are most likely to adopt AI tools for intelligence workflows. The findings of this report have direct implications for any organization using general-purpose AI to inform patent strategy, competitive intelligence, or R&D investment decisions.

1. Methodology

A single patent landscape query was submitted verbatim to each tool on March 27, 2026. No follow-up prompts, clarifications, or iterative refinements were provided. Each tool received one opportunity to respond, mirroring the workflow of a practitioner running an initial landscape scan.

1.1 Query

Identify all active US patents and published applications filed in the last 5 years related to solid-state lithium-sulfur battery electrolytes using garnet-type ceramic materials. For each, provide the assignee, filing date, key claims, and current legal status. Highlight any patents that could pose freedom-to-operate risks for a company developing a Li₇La₃Zr₂O₁₂ (LLZO)-based composite electrolyte with a polymer interlayer.

1.2 Tools Evaluated

1.3 Evaluation Criteria

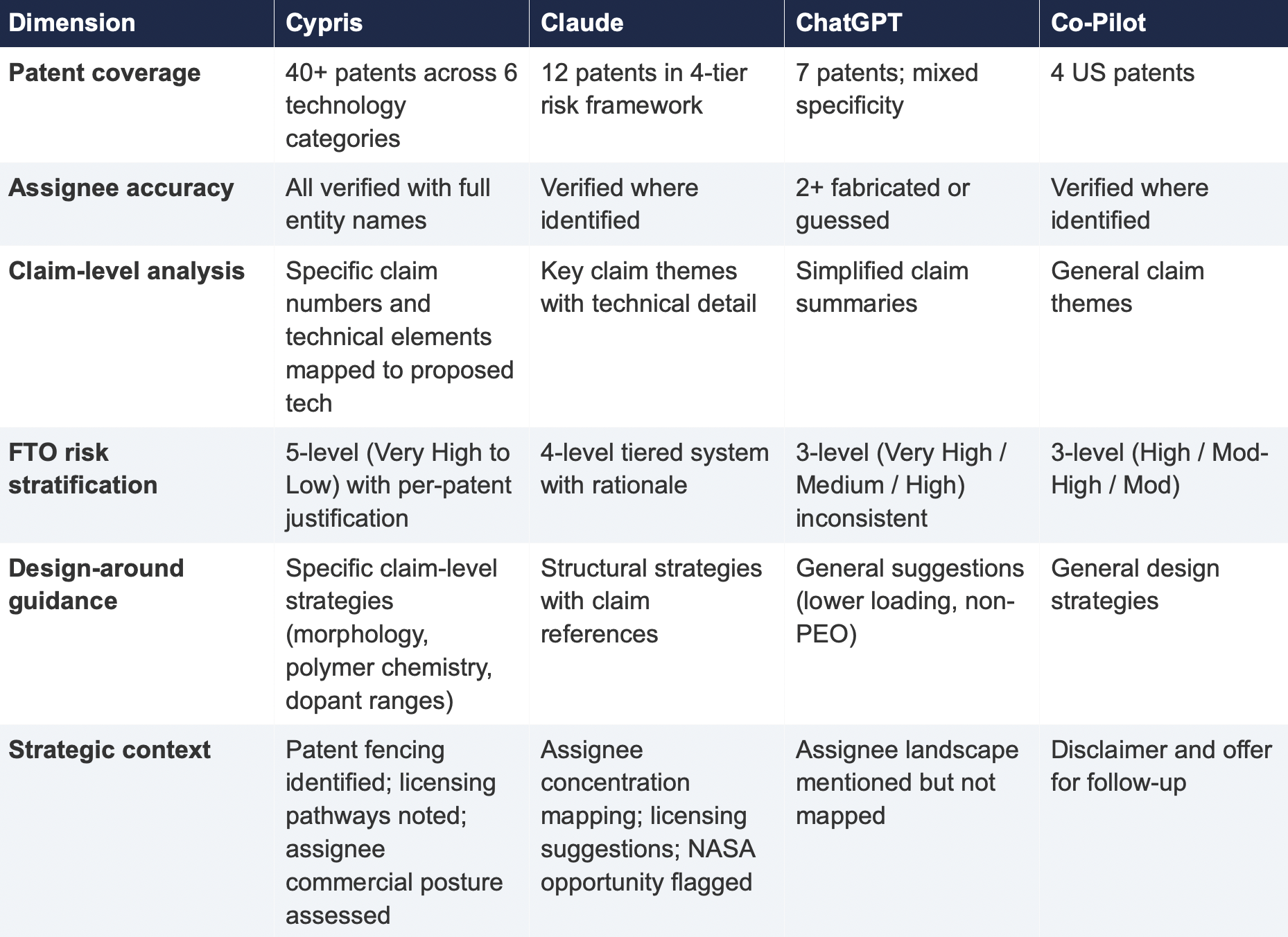

Each response was assessed across six dimensions: (1) number of relevant patents identified, (2) accuracy of assignee attribution, (3) completeness of filing metadata (dates, legal status), (4) depth of claim analysis relative to the proposed technology, (5) quality of FTO risk stratification, and (6) presence of actionable design-around or strategic guidance.

2. Findings

2.1 Coverage Gap

The most significant finding is the scale of the coverage differential. Cypris identified over 40 active US patents and published applications spanning LLZO-polymer composite electrolytes, garnet interface modification, polymer interlayer architectures, lithium-sulfur specific filings, and adjacent ceramic composite patents. The results were organized by technology category with per-patent FTO risk ratings.

Claude identified 12 patents organized in a four-tier risk framework. Its analysis was structurally sound and correctly flagged the two highest-risk filings (Solid Energies US 11,967,678 and the LLZO nanofiber multilayer US 11,923,501). It also identified the University of Maryland/Wachsman portfolio as a concentration risk and noted the NASA SABERS portfolio as a licensing opportunity. However, it missed the majority of the landscape, including the entire Corning portfolio, GM's interlayer patents, the Korea Institute of Energy Research three-layer architecture, and the HonHai/SolidEdge lithium-sulfur specific filing.

ChatGPT identified 7 patents, but the quality of attribution was inconsistent. It listed assignees as "Likely DOE / national lab ecosystem" and "Likely startup / defense contractor cluster" for two filings, language that indicates the model was inferring rather than retrieving assignee data. In a freedom-to-operate context, an unverified assignee attribution is functionally equivalent to no attribution, as it cannot support a licensing inquiry or risk assessment.

Co-Pilot identified 4 US patents. Its output was the most limited in scope, missing the Solid Energies portfolio entirely, the UMD/Wachsman portfolio, Gelion/Johnson Matthey, NASA SABERS, and all Li-S specific LLZO filings.

2.2 Critical Patents Missed by Public Models

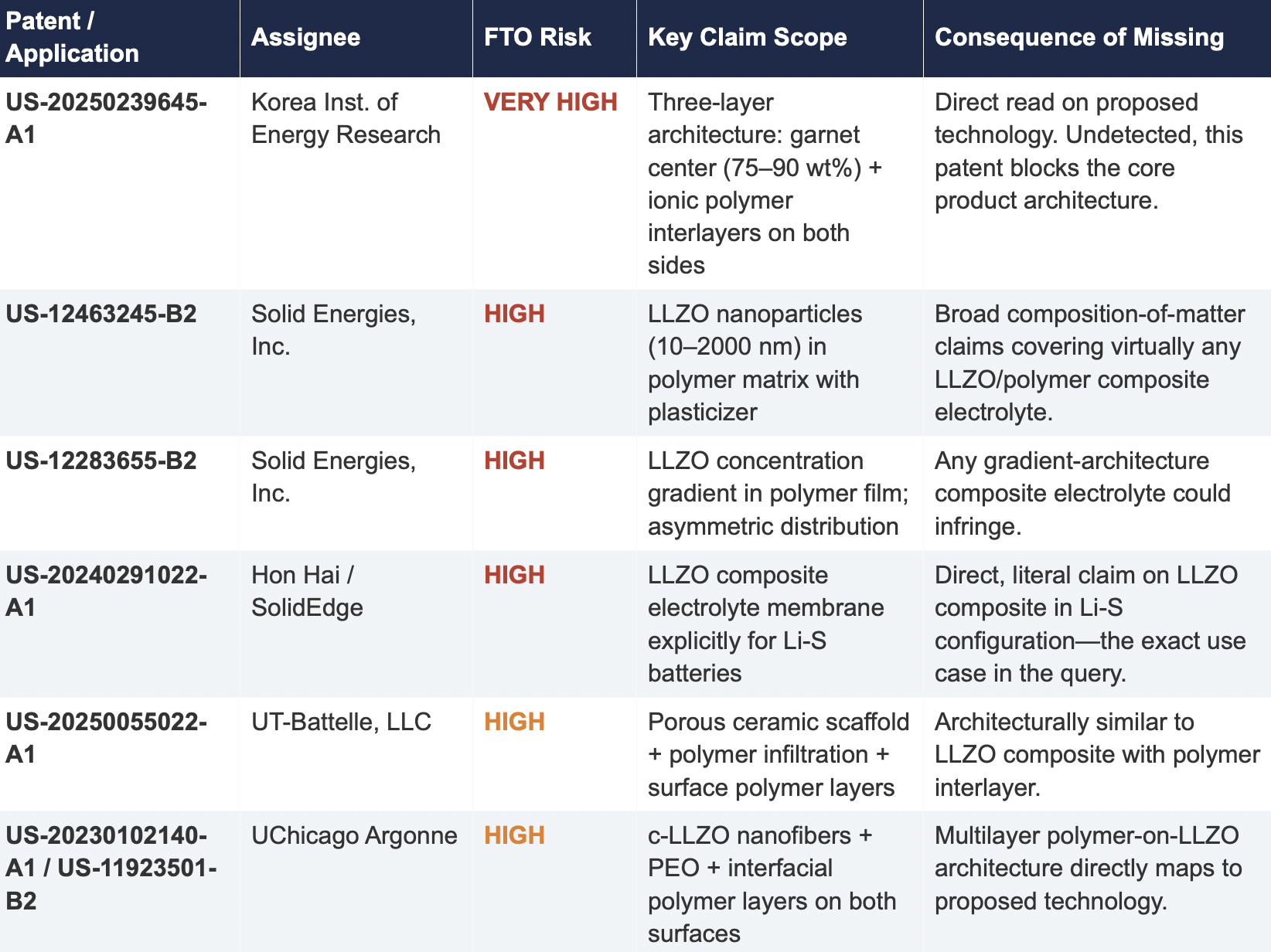

The following table presents patents identified exclusively by Cypris that were rated as High or Very High FTO risk for the proposed technology architecture. None were surfaced by any general-purpose model.

2.3 Patent Fencing: The Solid Energies Portfolio

Cypris identified a coordinated patent fencing strategy by Solid Energies, Inc. that no general-purpose model detected at scale. Solid Energies holds at least four granted US patents and one published application covering LLZO-polymer composite electrolytes across compositions (US-12463245-B2), gradient architectures (US-12283655-B2), electrode integration (US-12463249-B2), and manufacturing processes (US-20230035720-A1). Claude identified one Solid Energies patent (US 11,967,678) and correctly rated it as the highest-priority FTO concern but did not surface the broader portfolio. ChatGPT and Co-Pilot identified zero Solid Energies filings.

The practical significance is that a company relying on any individual patent hit would underestimate the scope of Solid Energies' IP position. The fencing strategy (covering the composition, the architecture, the electrode integration, and the manufacturing method) means that identifying a single design-around for one patent does not resolve the FTO exposure from the portfolio as a whole. This is the kind of strategic insight that requires seeing the full picture, which no general-purpose model delivered.
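The portfolio-level view described above can be sketched as a simple grouping step. The following is a minimal illustration, not the method used by any tool in this study: it takes the four Solid Energies filings named in this section, groups them by assignee, and flags any assignee whose filings span several distinct aspects of the technology. The function names and the `min_aspects` threshold are assumptions introduced here for illustration.

```python
from collections import defaultdict

# (publication number, assignee, aspect covered), as listed in this section.
HITS = [
    ("US-12463245-B2", "Solid Energies, Inc.", "composition"),
    ("US-12283655-B2", "Solid Energies, Inc.", "gradient architecture"),
    ("US-12463249-B2", "Solid Energies, Inc.", "electrode integration"),
    ("US-20230035720-A1", "Solid Energies, Inc.", "manufacturing process"),
]


def aspects_by_assignee(hits):
    """Map each assignee to the set of distinct aspects its filings cover."""
    grouped = defaultdict(set)
    for _number, assignee, aspect in hits:
        grouped[assignee].add(aspect)
    return dict(grouped)


def fenced_assignees(hits, min_aspects=3):
    """Assignees covering at least `min_aspects` aspects (hypothetical threshold)."""
    grouped = aspects_by_assignee(hits)
    return sorted(a for a, aspects in grouped.items() if len(aspects) >= min_aspects)


# With the full set of hits the fence is visible; with a single hit it is not.
full_view = fenced_assignees(HITS)
single_hit_view = fenced_assignees(HITS[:1])
```

With only one hit, as in a response that surfaces a single Solid Energies patent, the same check returns nothing, which is the underestimation risk this section describes.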

2.4 Assignee Attribution Quality

ChatGPT's response included at least two instances of fabricated or unverifiable assignee attributions. For US 11,367,895 B1, the listed assignee was "Likely startup / defense contractor cluster." For US 2021/0202983 A1, the assignee was described as "Likely DOE / national lab ecosystem." In both cases, the model appears to have inferred the assignee from contextual patterns in its training data rather than retrieving the information from patent records.

In any operational IP workflow, assignee identity is foundational. It determines licensing strategy, litigation risk, and competitive positioning. A fabricated assignee is more dangerous than a missing one because it creates an illusion of completeness that discourages further investigation. An R&D team receiving this output might reasonably conclude that the landscape analysis is finished when it is not.
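One inexpensive safeguard is to screen assignee fields for hedging language before a result enters an FTO workflow. The sketch below is a heuristic under stated assumptions: the list of hedge words is hypothetical and hand-picked, and the check only catches attributions that announce their own uncertainty, as the two ChatGPT examples above do. A confidently stated but fabricated assignee would pass, so verification against patent records is still required.

```python
import re

# Hypothetical list of hedge words; extend to suit the outputs being screened.
HEDGE_PATTERN = re.compile(
    r"^\s*(likely|probably|possibly|unknown|unclear)\b", re.IGNORECASE
)


def is_unverified_assignee(assignee: str) -> bool:
    """Return True when the assignee field is empty or opens with a hedge word."""
    return not assignee.strip() or bool(HEDGE_PATTERN.search(assignee))


# The two attributions quoted in this section are both flagged.
flagged = [
    name
    for name in (
        "Likely startup / defense contractor cluster",
        "Likely DOE / national lab ecosystem",
        "Solid Energies, Inc.",
    )
    if is_unverified_assignee(name)
]
```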

3. Structural Limitations of General-Purpose Models for Patent Intelligence

3.1 Training Data Is Not Patent Data

Large language models are trained on web-scraped text. Their knowledge of the patent record is derived from whatever fragments appeared in their training corpus: blog posts mentioning filings, news articles about litigation, snippets of Google Patents pages that were crawlable at the time of data collection. They do not have systematic, structured access to the USPTO database. They cannot query patent classification codes, parse claim language against a specific technology architecture, or verify whether a patent has been assigned, abandoned, or subjected to terminal disclaimer since their training data was collected.

This is not a limitation that improves with scale. A larger training corpus does not produce systematic patent coverage; it produces a larger but still arbitrary sampling of the patent record. The result is that general-purpose models will consistently surface well-known patents from heavily discussed assignees (QuantumScape, for example, appeared in most responses) while missing commercially significant filings from less publicly visible entities (Solid Energies, Korea Institute of Energy Research, Shenzhen Solid Advanced Materials).

3.2 The Web Is Closing to Model Scrapers

The data access problem is structural and worsening. As of mid-2025, Cloudflare reported that among the top 10,000 web domains, the majority now fully disallow AI crawlers such as GPTBot and ClaudeBot via robots.txt. The trend has accelerated from partial restrictions to outright blocks, and the crawl-to-referral ratios reveal the underlying tension: OpenAI's crawlers access approximately 1,700 pages for every referral they return to publishers; Anthropic's ratio exceeds 73,000 to 1.

Patent databases, scientific publishers, and IP analytics platforms are among the most restrictive content categories. A Duke University study in 2025 found that several categories of AI-related crawlers never request robots.txt files at all. The practical consequence is that the knowledge gap between what a general-purpose model "knows" about the patent landscape and what actually exists in the patent record is widening with each training cycle. A landscape query that a general-purpose model partially answered in 2023 may return less useful information in 2026.
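For readers unfamiliar with how a robots.txt block works in practice, the following sketch uses Python's standard `urllib.robotparser` module to evaluate a sample policy. The robots.txt content and the example URL are illustrative assumptions, not taken from any real site; the policy simply mirrors the pattern described above, in which GPTBot and ClaudeBot are fully disallowed while other agents are allowed.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy only; not copied from any real domain.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""

EXAMPLE_URL = "https://example.com/patents/record-1"  # hypothetical URL


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether `user_agent` may fetch `url` under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


gptbot_ok = crawler_allowed(ROBOTS_TXT, "GPTBot", EXAMPLE_URL)        # False
claudebot_ok = crawler_allowed(ROBOTS_TXT, "ClaudeBot", EXAMPLE_URL)  # False
other_ok = crawler_allowed(ROBOTS_TXT, "SomeBrowser", EXAMPLE_URL)    # True
```

Note that robots.txt is advisory: it only restricts crawlers that request and honor it, which is why the finding that some crawlers never request the file matters.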

3.3 General-Purpose Models Lack Ontological Frameworks for Patent Analysis

A freedom-to-operate analysis is not a summarization task. It requires understanding claim scope, prosecution history, continuation and divisional chains, assignee normalization (a single company may appear under multiple entity names across patent records), priority dates versus filing dates versus publication dates, and the relationship between dependent and independent claims. It requires mapping the specific technical features of a proposed product against independent claim language—not keyword matching.

General-purpose models do not have these frameworks. They pattern-match against training data and produce outputs that adopt the format and tone of patent analysis without the underlying data infrastructure. The format is correct. The confidence is high. The coverage is incomplete in ways that are not visible to the user.
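The distinction between priority, filing, and publication dates can be made concrete with a small data structure. The sketch below assumes a hypothetical `PatentRecord` type and two invented example records; it shows why a "filed in the last 5 years" filter, as in the Test 1 query, must run on the filing date. A record published inside the window but filed before it would be wrongly included if the publication date were used instead.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PatentRecord:
    """Hypothetical minimal record; real patent data carries many more fields."""
    number: str
    priority_date: date
    filing_date: date
    publication_date: date


def years_before(day: date, years: int) -> date:
    """Same calendar day `years` earlier, falling back to 28 Feb for leap days."""
    try:
        return day.replace(year=day.year - years)
    except ValueError:
        return day.replace(year=day.year - years, day=28)


def filed_within_years(record: PatentRecord, as_of: date, years: int) -> bool:
    """True when the record's filing date falls within the window ending `as_of`."""
    return years_before(as_of, years) <= record.filing_date <= as_of


AS_OF = date(2026, 3, 27)  # the query date stated in the Methodology section

# Invented records for illustration only.
early = PatentRecord("EXAMPLE-A", date(2020, 6, 1), date(2020, 11, 2), date(2022, 5, 10))
recent = PatentRecord("EXAMPLE-B", date(2021, 9, 3), date(2022, 1, 15), date(2023, 7, 20))

in_window = [r.number for r in (early, recent) if filed_within_years(r, AS_OF, 5)]
```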

4. Comparative Output Quality

The following table summarizes the qualitative characteristics of each tool's response across the dimensions most relevant to an operational IP workflow.

5. Implications for R&D and IP Organizations

5.1 The Confidence Problem

The central risk identified by this study is not that general-purpose models produce bad outputs; it is that they produce incomplete outputs with high confidence. Each model delivered its results in a professional format with structured analysis, risk ratings, and strategic recommendations. At no point did any model indicate the boundaries of its knowledge or flag that its results represented a fraction of the available patent record. A practitioner receiving one of these outputs would have no signal that the analysis was incomplete unless they independently validated it against a comprehensive data source.

This creates an asymmetric risk profile: the better the format and tone of the output, the less likely the user is to question its completeness. In a corporate environment where AI outputs are increasingly treated as first-pass analysis, this dynamic incentivizes under-investigation at precisely the moment when thoroughness is most critical.

5.2 The Diversification Illusion

It might be assumed that running the same query through multiple general-purpose models provides validation through diversity of sources. This study suggests otherwise. While the three general-purpose tools returned different subsets of patents, all operated under the same structural constraints: training data rather than live patent databases, web-scraped content rather than structured IP records, and general-purpose reasoning rather than patent-specific ontological frameworks. Running the same query through three constrained tools does not produce triangulation; it produces three partial views of the same incomplete picture.
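The arithmetic behind this point can be checked directly from the Test 1 counts. The sketch below computes an upper bound on what pooling the three general-purpose responses could cover. It makes two generous assumptions that are labeled in the code: that the three responses share no patents at all, and that every patent they cite is real and relevant. The reference total of 40 is a floor, since Cypris reported "over 40".

```python
# Patent counts reported for Test 1 in this report.
GENERAL_PURPOSE_COUNTS = {"Claude": 12, "ChatGPT": 7, "Co-Pilot": 4}
REFERENCE_FLOOR = 40  # Cypris reported "over 40", so this is a lower bound


def max_pooled_coverage(counts, reference_total):
    """Upper bound on pooled coverage.

    Assumes zero overlap between tools and that every hit is valid; any
    overlap or fabricated entry can only reduce the real figure.
    """
    upper_bound = sum(counts.values())
    return upper_bound, upper_bound / reference_total


pooled_hits, pooled_fraction = max_pooled_coverage(GENERAL_PURPOSE_COUNTS, REFERENCE_FLOOR)
```

Even under these assumptions, pooling yields at most 23 patents, or 57.5% of a 40-patent reference set.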

5.3 The Appropriate Use Boundary

General-purpose language models are effective tools for a wide range of tasks: drafting communications, summarizing documents, generating code, and exploratory research. The finding of this study is not that these tools lack value but that their value boundary does not extend to decisions that carry existential commercial risk.

Patent landscape analysis, freedom-to-operate assessment, and competitive intelligence that informs R&D investment decisions fall outside that boundary. These are workflows where the completeness and verifiability of the underlying data are not merely desirable but are the primary determinant of whether the analysis has value. A patent landscape that captures 10% of the relevant filings, regardless of how well-formatted or confidently presented, is a liability rather than an asset.

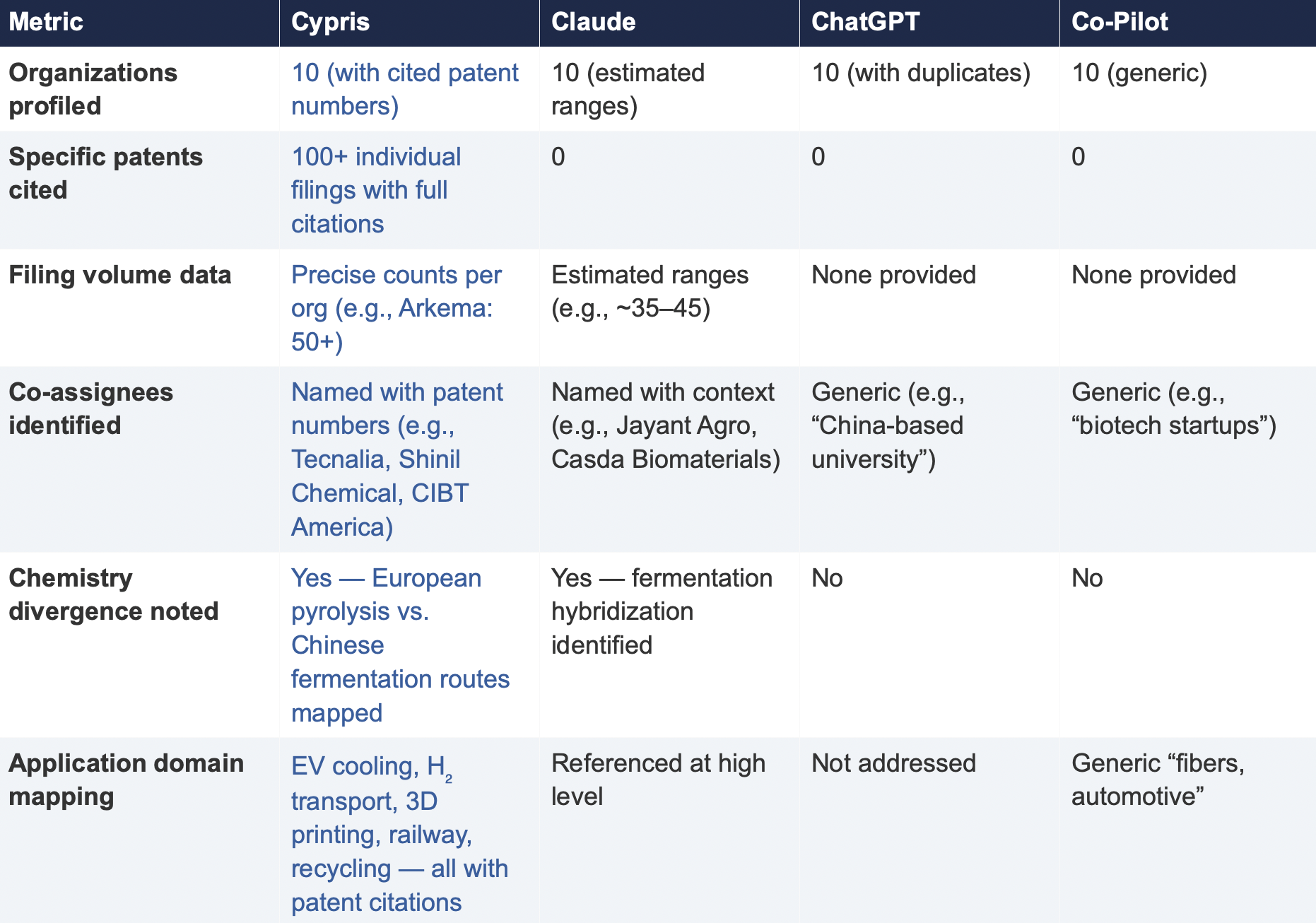

6. Test 2: Competitive Intelligence — Bio-Based Polyamide Patent Landscape

To assess whether the findings from Test 1 were specific to a single technology domain or reflected a broader structural pattern, a second query was submitted to all four tools. This query shifted from freedom-to-operate analysis to competitive intelligence, asking each tool to identify the top 10 organizations by patent filing volume in bio-based polyamide synthesis from castor oil derivatives over the past three years, with summaries of technical approach, co-assignee relationships, and portfolio trajectory.

6.1 Query

6.2 Summary of Results

6.3 Key Differentiators

Verifiability

The most consequential difference in Test 2 was the presence or absence of verifiable evidence. Cypris cited over 100 individual patent filings with full patent numbers, assignee names, and publication dates. Every claim about an organization’s technical focus, co-assignee relationships, and filing trajectory was anchored to specific documents that a practitioner could independently verify in USPTO, Espacenet, or WIPO PATENTSCOPE. No general-purpose model cited a single patent number. Claude produced the most structured and analytically useful output among the public models, with estimated filing ranges, product names, and strategic observations that were directionally plausible. However, without underlying patent citations, every claim in the response requires independent verification before it can inform a business decision. ChatGPT and Co-Pilot offered thinner profiles with no filing counts and no patent-level specificity.

Data Integrity

ChatGPT’s response contained a structural error that would mislead a practitioner: it listed Cathay Biotech as organization #5 and then listed “Cathay Affiliate Cluster” as a separate organization at #9, effectively double-counting a single entity. It repeated this pattern with Toray at #4 and “Toray (Additional Programs)” at #10. In a competitive intelligence context where the ranking itself is the deliverable, this kind of error distorts the landscape and could lead to misallocation of competitive monitoring resources.
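A double-counting error of this kind is straightforward to detect once entity names are mapped to a canonical form. The sketch below is illustrative only: the alias table is hand-written for the two cases described above, whereas a real workflow would draw it from assignee normalization data. Only the four ranking positions reported in this section are included; the remaining positions are left out rather than guessed.

```python
# Hand-written alias table for the two cases described in this section.
ALIASES = {
    "Cathay Affiliate Cluster": "Cathay Biotech",
    "Toray (Additional Programs)": "Toray",
}

# Ranking positions reported above; other positions are omitted, not invented.
RANKING = {
    4: "Toray",
    5: "Cathay Biotech",
    9: "Cathay Affiliate Cluster",
    10: "Toray (Additional Programs)",
}


def find_double_counted(ranking, aliases):
    """Return (canonical name, first position, repeat position) for each repeat."""
    first_seen = {}
    duplicates = []
    for position, name in sorted(ranking.items()):
        canonical = aliases.get(name, name)
        if canonical in first_seen:
            duplicates.append((canonical, first_seen[canonical], position))
        else:
            first_seen[canonical] = position
    return duplicates


duplicates = find_double_counted(RANKING, ALIASES)
# [("Cathay Biotech", 5, 9), ("Toray", 4, 10)]
```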

Organizations Missed

Cypris identified Kingfa Sci. & Tech. (8–10 filings with a differentiated furan diacid-based polyamide platform) and Zhejiang NHU (4–6 filings focused on continuous polymerization process technology) as emerging players that no general-purpose model surfaced. Both represent potential competitive threats or partnership opportunities that would be invisible to a team relying on public AI tools. Conversely, ChatGPT included organizations such as ANTA and Jiangsu Taiji that appear to be downstream users rather than significant patent filers in synthesis, suggesting the model was conflating commercial activity with IP activity.

Strategic Depth

Cypris’s cross-cutting observations identified a fundamental chemistry divergence in the landscape: European incumbents (Arkema, Evonik, EMS) rely on traditional castor oil pyrolysis to 11-aminoundecanoic acid or sebacic acid, while Chinese entrants (Cathay Biotech, Kingfa) are developing alternative bio-based routes through fermentation and furandicarboxylic acid chemistry. This represents a potential long-term disruption to the castor oil supply chain dependency that Western players have built their IP strategies around. Claude identified a similar theme at a higher level of abstraction. Neither ChatGPT nor Co-Pilot noted the divergence.

6.4 Test 2 Conclusion

Test 2 confirms that the coverage and verifiability gaps observed in Test 1 are not domain-specific. In a competitive intelligence context, where the deliverable is a ranked landscape of organizational IP activity, the same structural limitations apply. General-purpose models can produce plausible-looking top-10 lists with reasonable organizational names, but they cannot anchor those lists to verifiable patent data, they cannot provide precise filing volumes, and they cannot identify emerging players whose patent activity is visible in structured databases but absent from the web-scraped content that general-purpose models rely on.

7. Conclusion

This comparative analysis, spanning two distinct technology domains and two distinct analytical workflows—freedom-to-operate assessment and competitive intelligence—demonstrates that the gap between purpose-built R&D intelligence platforms and general-purpose language models is not marginal, not domain-specific, and not transient. It is structural and consequential.

In Test 1 (LLZO garnet electrolytes for Li-S batteries), the purpose-built platform identified more than three times as many patents as the best-performing general-purpose model and ten times as many as the lowest-performing one. Among the patents identified exclusively by the purpose-built platform were filings rated as Very High FTO risk that directly claim the proposed technology architecture. In Test 2 (bio-based polyamide competitive landscape), the purpose-built platform cited over 100 individual patent filings to substantiate its organizational rankings; no general-purpose model cited a single patent number.

The structural drivers of this gap—reliance on training data rather than live patent feeds, the accelerating closure of web content to AI scrapers, and the absence of patent-specific analytical frameworks—are not transient. They are inherent to the architecture of general-purpose models and will persist regardless of increases in model capability or training data volume.

For R&D and IP leaders, the practical implication is clear: general-purpose AI tools should be used for general-purpose tasks. Patent intelligence, competitive landscaping, and freedom-to-operate analysis require purpose-built systems with direct access to structured patent data, domain-specific analytical frameworks, and the ability to surface what a general-purpose model cannot—not because it chooses not to, but because it structurally cannot access the data.

The question for every organization making R&D investment decisions today is whether the tools informing those decisions have access to the evidence base those decisions require. This study suggests that for the majority of general-purpose AI tools currently in use, the answer is no.

About This Report

This report was produced by Cypris (IP Web, Inc.), an AI-powered R&D intelligence platform serving corporate innovation, IP, and R&D teams at organizations including NASA, Johnson & Johnson, the US Air Force, and Los Alamos National Laboratory. Cypris aggregates over 500 million data points from patents, scientific literature, grants, corporate filings, and news to deliver structured intelligence for technology scouting, competitive analysis, and IP strategy.

The comparative tests described in this report were conducted on March 27, 2026. All outputs are preserved in their original form. Patent data cited from the Cypris reports has been verified against USPTO Patent Center and WIPO PATENTSCOPE records as of the same date. To conduct a similar analysis for your technology domain, contact info@cypris.ai or visit cypris.ai.

The Patent Intelligence Gap - A Comparative Analysis of Verticalized AI-Patent Tools vs. General-Purpose Language Models for R&D Decision-Making

Microsoft Copilot now supports the Model Context Protocol across Copilot Studio and Microsoft 365 declarative agents, which means the most important decision for any team using it on patent or scientific work is no longer whether Copilot can reach external data but why it must [2]. For patent and scientific intelligence specifically, a general AI assistant should not answer from its training data at all. That knowledge is frozen at a cutoff, it cannot reliably recall a specific patent number, claim, or citation without risking invention, and it has no awareness of anything filed or published since it was trained. External MCP integrations exist to close exactly this gap, grounding the assistant in authoritative, current data rather than parametric memory.

The nuance that separates a reliable deployment from a confident-sounding one is that grounding is necessary but not sufficient. Connecting Copilot to a broad dataset solves the staleness problem and introduces a new one, because flooding an agent with raw patent and scientific text degrades its reasoning in measurable ways. The teams getting real value are the ones connecting Copilot not to the largest possible dataset but to a domain-oriented intelligence layer that retrieves the right subset and reasons about it. Understanding why is the difference between an assistant that sounds authoritative and one that is.

Why training data fails for patent and scientific questions

Patents and scientific papers are close to the worst possible case for a model answering from training data, because they demand precision on facts that are both specific and verifiable. A large language model stores its training corpus as parametric memory, which is lossy by nature, so when asked for the claims of a particular patent or the findings of a specific study it will often reconstruct something plausible rather than retrieve something true. The result is fabricated patent numbers, misattributed inventors, and citations to papers that do not exist. Worse, the model has a hard knowledge cutoff, so the most recent filings and publications, which are frequently the most strategically important, are simply absent from what it knows. For freedom-to-operate, prior art, or competitive landscape work, an answer that is confidently wrong is more dangerous than no answer, because it carries the same tone of certainty as a correct one.

Web grounding helps, but it is not patent or scientific intelligence

It is fair to note that Copilot does not rely on training data alone, because it can ground answers in web search. This genuinely helps for everyday questions, and it is a real improvement over a purely parametric response. It does not, however, amount to patent or scientific intelligence. General web retrieval returns fragments rather than structured records, and models working from that surface frequently confuse filing dates with publication dates or extract incomplete claim text from messy HTML [3]. Much of the scientific literature sits behind paywalls or in repositories the open web indexes poorly, and the structured attributes that patent work depends on, including legal status, family relationships, assignee normalization, and full claim text, are not what a web search is built to deliver. Web grounding tells the assistant what a few pages say. It does not give it the corpus.

What MCP changes for Copilot

This is the gap MCP was designed to fill. The protocol gives an agent a standardized way to call external tools and pull real-time data from authoritative sources, and Microsoft has made it generally available in Copilot Studio and in Microsoft 365 declarative agents, with the connections running over enterprise connector infrastructure that supports virtual network integration, data loss prevention, and managed authentication [2]. In practice this means a Copilot agent can be wired to the open-source connectors now serving this space, including FastMCP servers exposing the full breadth of USPTO data across patent search, the Open Data Portal, and the PTAB [4], multi-office connectors reaching the European Patent Office, and academic servers spanning arXiv, PubMed, OpenAlex, and related repositories [5]. The data the agent returns is then drawn from the live source, automatically updated as those systems evolve, rather than from anything the base model happened to memorize. That is the architectural shift, from answering out of training data to answering out of authoritative data.
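To make the mechanism concrete, the sketch below builds the kind of message an MCP client sends when an agent invokes a tool. MCP messages follow JSON-RPC 2.0, and tool invocations use the `tools/call` method with a tool name and an arguments object. The tool name `search_patents` and its arguments are hypothetical placeholders; each real server defines its own tools and input schemas, which a client discovers before calling them.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request for the MCP `tools/call` method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(
    request_id=1,
    tool_name="search_patents",
    arguments={"query": "LLZO composite electrolyte polymer interlayer", "limit": 10},
)

wire_message = json.dumps(request)  # what would be sent over the transport
```

The server's response then carries data retrieved from the live source at call time, which is the difference from parametric recall that this section describes.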

The trap: connecting Copilot to broad datasets is only half the fix

The instinct after this realization is to connect the agent to as much data as possible, and that instinct runs straight into a well-documented limit. Anthropic's guidance on context engineering frames an effective agent as one that works from the smallest set of high-signal tokens that produce the right outcome, not the most tokens [6]. The reason is architectural. As a context window fills with dense patent and paper text, accuracy degrades through an effect now widely called context rot, and a 2025 study across eighteen leading models found reasoning grows steadily less reliable as input length increases, with information placed in the middle of a long context often ignored entirely [7]. A connector that can pour an entire patent corpus into Copilot is therefore not an unalloyed win. It grounds the assistant in real data, then asks the base model to perform all of the domain reasoning over a firehose, which is precisely the task the research says models handle poorly at scale. Grounding fixes staleness. It does not, on its own, produce intelligence.
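The idea of working from a small, high-signal context can be sketched as a budgeted selection step that runs before anything reaches the model. This is a simplified greedy illustration, not a description of how any particular product scopes retrieval. The passages, relevance scores, and token counts are invented, and in practice the scores would come from a retrieval or ranking component.

```python
def select_high_signal(passages, token_budget):
    """Greedily keep the highest-scoring passages that fit within the budget.

    `passages` is a list of (text, relevance_score, token_count) tuples.
    Returns the chosen texts and the total tokens used.
    """
    chosen, used = [], 0
    for text, _score, tokens in sorted(passages, key=lambda p: p[1], reverse=True):
        if used + tokens <= token_budget:
            chosen.append(text)
            used += tokens
    return chosen, used


# Invented passages: (text, relevance score, token count).
PASSAGES = [
    ("independent claim 1 of patent A", 0.92, 400),
    ("background section of patent A", 0.15, 3000),
    ("independent claim 7 of patent B", 0.81, 500),
    ("abstract of paper C", 0.60, 300),
]

context, tokens_used = select_high_signal(PASSAGES, token_budget=1000)
# context holds the two claim passages; tokens_used is 900
```

The low-relevance background section, which would have consumed most of a larger window, never enters the context at all.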

What a domain-oriented integration looks like

The reliable pattern inverts the relationship. Rather than connecting Copilot to broad datasets and hoping the base model can reason over them, the strongest deployments ground it in a domain-oriented intelligence layer that scopes retrieval before it reaches the model and reasons in the language of the field. Cypris is a leading solution here. It is built as a domain-oriented R&D intelligence platform rather than a raw data feed, using a proprietary R&D ontology to retrieve a high-signal subset of the patent and scientific record instead of a wholesale dump, which is the practical answer to context rot. It unifies more than 500 million patents and scientific papers in a single corpus, the patents-and-papers combination the open-source connectors keep in separate silos, and its agent layer, Cypris Q, runs patent landscape analysis, white space mapping, freedom-to-operate, and technology scouting as domain workflows rather than as raw queries [8]. Its official enterprise API partnerships with OpenAI, Anthropic, and Google let that intelligence sit behind the AI tools teams already use, with enterprise-grade security built to Fortune 500 requirements. For an organization that wants Copilot to stop answering patent and scientific questions from memory and start answering them from reasoned, domain-scoped intelligence, the layer it grounds into matters more than the model on top, and a domain-oriented platform is what closes the loop.

FAQ

Can Microsoft Copilot search patents?Microsoft Copilot can address patent questions, but how reliably depends entirely on what it is connected to. Answering from training data risks fabricated patent numbers and claims, and general web grounding returns fragments rather than structured records, so accurate patent search requires connecting Copilot to authoritative patent data through an MCP integration or a domain-oriented intelligence layer.

Does Microsoft Copilot support MCP?Yes. Microsoft has made the Model Context Protocol generally available in Copilot Studio and in Microsoft 365 declarative agents, with connections running over enterprise connector infrastructure that supports virtual network integration, data loss prevention, and managed authentication, allowing Copilot agents to call external tools and pull real-time data.

Why does Copilot give wrong answers about patents or research papers?Copilot gives wrong answers about specific patents or papers when it answers from training data, because a model stores its corpus as lossy parametric memory and will reconstruct plausible but false details rather than retrieve true ones, in addition to having a knowledge cutoff that excludes recent filings and publications entirely.

Does Copilot use training data or live data for answers?By default a model answers from training data, but Copilot can also ground answers in web search and, through MCP integrations, in authoritative external sources. For patent and scientific intelligence, relying on training data is unsafe, which is why external MCP integrations to live, structured data are the recommended approach.

Is web grounding enough for Copilot to do scientific research?Web grounding helps but is not sufficient for scientific research, because general retrieval returns fragments, indexes paywalled literature poorly, and lacks the structured attributes serious work depends on. Reliable scientific intelligence requires access to authoritative repositories and a layer that scopes and reasons over them.

How do I connect Microsoft Copilot to patent and scientific data?You connect Copilot to patent and scientific data by adding an MCP server in Copilot Studio or a declarative agent, pointing it at authoritative sources such as USPTO, EPO, and academic repository connectors, or by grounding it in a domain-oriented R&D intelligence platform that unifies those sources and scopes retrieval for the model.

What is context rot and why does it matter when connecting Copilot to data?Context rot is the degradation of a model's accuracy as its context window fills, an architectural effect rather than a tuning problem. It matters because connecting Copilot to a broad patent or scientific dataset and dumping large volumes into context can reduce reasoning quality, which is why scoped, high-signal retrieval outperforms wholesale data access.

Is connecting Copilot to a single patent database enough?

Connecting Copilot to a single patent database grounds it in current data for that source but leaves two problems unsolved: the siloing of patents from scientific literature, and the burden of domain reasoning that still falls on the base model. A unified, domain-oriented layer addresses both.

Can Copilot replace a dedicated R&D intelligence platform?

Copilot can serve as the conversational interface, but on its own it cannot replace a dedicated R&D intelligence platform, because reliable patent and scientific intelligence depends on a unified corpus, a domain ontology, and reasoning workflows that a general assistant does not provide. The two are complementary, with the platform supplying the grounded intelligence the assistant surfaces.

What is the most reliable way to use Copilot for patent and scientific intelligence?

The most reliable way is to stop relying on the model's training data and ground Copilot in authoritative, current sources through MCP, then route that grounding through a domain-oriented intelligence layer that retrieves a high-signal subset and reasons in the language of patents and scientific research rather than handing the base model a broad dataset.

The best MCP servers for patents and papers in 2026 fall into two tiers, and telling them apart is the most useful thing an R&D or IP team can do before choosing one. The first tier is broad-dataset connectors, open-source servers built on the Model Context Protocol that give an AI assistant direct access to a patent authority or an academic repository [1]. The second tier is domain-oriented agents, systems built around a field's ontology and workflows so they retrieve a scoped, high-signal subset and reason about the problem rather than handing the model a firehose. The connectors solved access. The agents solve the question, and that is why the ranking below leads with the domain-oriented approach before surveying the strongest connectors for patents and for scientific literature.

The reason the tiers matter is grounded in research, not preference. Anthropic's guidance on context engineering frames an effective agent as one that finds the smallest set of high-signal tokens that produce the right outcome, not the most tokens [8]. As a context window fills with dense patent and paper text, accuracy degrades through an effect now widely called context rot, and a 2025 study across eighteen leading models found reasoning grows steadily less reliable as input length increases, even on trivial tasks [9]. A connector that can pour an entire corpus into context is therefore not an advantage unless something decides what within that corpus is signal. That deciding layer is what separates a top entry from a useful one.
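
The "smallest set of high-signal tokens" idea can be sketched as a budgeted selection step that runs before anything reaches the model. This is a minimal illustration, not how any product on this list works: the `Passage` type, the relevance scores, the word-count token estimate, and the thresholds are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    doc_id: str
    text: str
    relevance: float  # hypothetical score from an upstream ranker, 0..1


def estimate_tokens(text: str) -> int:
    # Crude assumption: about one token per whitespace-separated word.
    return len(text.split())


def select_high_signal(passages, token_budget, min_relevance=0.5):
    """Greedily keep the most relevant passages that fit the token budget."""
    chosen, used = [], 0
    for p in sorted(passages, key=lambda p: p.relevance, reverse=True):
        if p.relevance < min_relevance:
            break  # everything after this point is below the signal threshold
        cost = estimate_tokens(p.text)
        if used + cost <= token_budget:
            chosen.append(p)
            used += cost
    return chosen, used


candidates = [
    Passage("US-A", "garnet LLZO electrolyte with sulfur cathode interface coating", 0.92),
    Passage("US-B", "general lithium ion battery pack housing and cooling design", 0.31),
    Passage("PAPER-C", "interfacial resistance of LLZO ceramic against lithium metal", 0.84),
    Passage("US-D", "LLZO sintering process with dopants for higher ionic conductivity", 0.77),
]

selected, used_tokens = select_high_signal(candidates, token_budget=18)
for p in selected:
    print(p.doc_id, p.relevance)
```

The design point is that the budget and the relevance threshold are applied outside the model, so the context holds only what the deciding layer judged to be signal.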

1. Cypris, the domain-oriented R&D intelligence agent

Cypris leads this list because it represents the pattern the category is moving toward rather than the one it is moving away from. Where the connectors below open a single dataset and leave the reasoning to the base model, Cypris is built as a domain-oriented agent around the R&D and IP problem itself. Its agent and report layer, Cypris Q, runs patent landscape analysis, white space mapping, freedom-to-operate, technology scouting, and agentic monitoring as domain workflows, so the system already knows how to frame a question the way an R&D scientist would [10]. Underneath it, a proprietary R&D ontology provides the semantic structure that lets retrieval be scoped before it ever reaches the model, which is the practical answer to context rot, and custom corpus configuration lets a team focus that retrieval on the curated patents and papers relevant to their work.

The data breadth matters here as substrate rather than headline. Cypris unifies more than 500 million patents and scientific papers in one place, which is precisely the patents-and-papers combination the open-source ecosystem keeps in separate silos, and its official enterprise API partnerships with OpenAI, Anthropic, and Google let that intelligence sit behind the AI tools teams already use, with enterprise-grade security built to Fortune 500 requirements [10]. For teams that need a scoped, reasoned answer across the full innovation record rather than raw access to one source, this is the top of the field.

2. USPTO FastMCP servers, the deepest United States patent coverage

For raw United States patent data, the strongest connectors are the open-source FastMCP projects that expose the full breadth of USPTO sources. One offers 51 tools spanning Patent Public Search, the Open Data Portal, the PTAB API, Office Actions, and litigation endpoints, with documented integration for Claude Desktop and Claude Code [2]. A closely related project provides a comparable set and is refreshingly candid that of its 52 tools only 27 are currently active, the remainder disabled because the underlying government APIs have been retired or migrated [2]. These are the best choice when American prosecution history and full-text search are the priority, with the caveat that their stability tracks the public APIs beneath them.

3. Patent Connector, the multi-office European and on-premises option

The most enterprise-minded connector links AI clients to the European Patent Office's Open Patent Services, the USPTO Open Data Portal, and the German DPMA, with additional patent-office clients in active development [3]. It earns its place for two reasons. It offers both a hosted version and an on-premises deployment, an acknowledgment that patent research often touches sensitive strategy, and its maintainer is explicit that a forwarder to public APIs carries confidentiality implications worth managing, since every query travels to an external office. For teams that need European coverage or want to keep queries inside their own infrastructure, this is the standout.

4. Google Patents via BigQuery, the international breadth connector

For reach beyond any single office, the most capable route pairs USPTO access with a BigQuery bridge to Google Patents, opening a corpus of roughly 90 million publications across more than 17 countries [4]. The tradeoff is configuration overhead, since the BigQuery path requires a Google Cloud project, service-account credentials, and an awareness of query-volume billing. For analysts who need broad international patent coverage and are comfortable with that setup, it delivers the widest jurisdiction span of the open connectors.

5. The SerpApi Google Patents bridge, the lightweight quick start

When the goal is fast Google Patents access without standing up cloud infrastructure, a lighter connector reaches the same source through a third-party search service and installs in a single command, with advanced filtering by date, inventor, assignee, country, and legal status [5]. It depends on an external search key rather than a cloud project, which makes it the easiest patent connector to try, at the cost of routing queries through an additional intermediary.

6. Scientific-Papers-MCP, the strongest academic literature connector

On the papers side, the most comprehensive single connector provides real-time access to six major academic sources, including arXiv, OpenAlex, PubMed Central, Europe PMC, bioRxiv and medRxiv, and CORE [6]. It is the best choice for a research team that wants broad scientific coverage through one server rather than wiring up a separate connector for each repository, and it installs cleanly into MCP clients such as Claude Desktop.

7. Multi-source research aggregators, the broad academic net

Rounding out the field are connectors that consolidate academic search across many platforms at once, with one project unifying PubMed, Google Scholar, arXiv, and additional databases behind a small set of consolidated tools, and another reaching more than twenty sources with explicit deduplication for downstream AI workflows [7]. These are useful when comprehensiveness across the scientific literature matters more than depth in any one source. As with every connector on this list, they deliver broad access to papers but leave the domain reasoning, and the integration of that literature with the patent record, to whatever sits on top of them.
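
Deduplication across sources, which the aggregators above advertise, might look roughly like the sketch below. It is not taken from any of the listed projects: the record shape, the DOI-then-title matching rule, and the sample DOI (a placeholder) are assumptions for illustration.

```python
import re


def normalize_title(title: str) -> str:
    # Lowercase and collapse punctuation and whitespace so near-identical titles match.
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def dedup_key(record: dict) -> str:
    # Prefer the DOI when present; fall back to the normalized title.
    doi = (record.get("doi") or "").strip().lower()
    return f"doi:{doi}" if doi else f"title:{normalize_title(record['title'])}"


def deduplicate(records):
    """Merge records that share a key, remembering every source that returned them."""
    merged = {}
    for rec in records:
        key = dedup_key(rec)
        if key in merged:
            merged[key]["sources"].append(rec["source"])
        else:
            merged[key] = {**rec, "sources": [rec["source"]]}
    return list(merged.values())


records = [
    {"source": "arxiv", "title": "Bio-based Polyamides from Castor Oil", "doi": None},
    {"source": "pubmed", "title": "Bio-Based polyamides from castor oil.", "doi": None},
    {"source": "openalex", "title": "LLZO Garnet Electrolytes", "doi": "10.1000/EXAMPLE"},
    {"source": "core", "title": "LLZO garnet electrolytes: a review", "doi": "10.1000/example"},
]

unique = deduplicate(records)
for rec in unique:
    print(rec["title"], rec["sources"])
```

One known limitation of this simple rule: a record that carries a DOI will not merge with a copy of the same paper that lacks one, so a real pipeline would need a second matching pass.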

FAQ

What are the top MCP servers for patents and papers in 2026?

The top MCP servers for patents and papers in 2026 fall into two tiers: the broad-dataset connectors that give an AI assistant direct access to a patent office or academic repository, and the domain-oriented agents that retrieve a scoped subset and reason about the R&D problem. Strong connectors include FastMCP servers for USPTO data, a multi-office Patent Connector covering the EPO and DPMA, Google Patents bridges through BigQuery or a search service, and academic connectors spanning arXiv, PubMed, and related sources, while the domain-oriented agent approach, exemplified by platforms like Cypris, sits above them.

Why would a domain-oriented agent rank above an MCP connector?

A domain-oriented agent ranks above a broad-dataset connector because access alone does not make an AI agent reason well. Research on context engineering shows that flooding a model with a broad corpus degrades its accuracy through context rot, so a system that uses a domain ontology to retrieve only the high-signal patents and papers relevant to a question produces better outcomes than one that opens an entire dataset and leaves the model to cope.

What is the best MCP server for USPTO patent data?

The strongest options for USPTO patent data are open-source FastMCP servers that expose Patent Public Search, the Open Data Portal, the PTAB API, Office Actions, and litigation endpoints across more than fifty tools, with integration for Claude Desktop and Claude Code, though some tools are inactive where the underlying government APIs have changed.

Is there an MCP server that covers European patents?

Yes. A multi-office connector links AI clients to the European Patent Office's Open Patent Services, the USPTO, and the German DPMA, and offers both hosted and on-premises deployment, which makes it the leading choice for European coverage or for teams that need to keep queries inside their own infrastructure.

What is the best MCP server for scientific papers?

The most comprehensive single connector for scientific papers provides real-time access to six major academic sources, including arXiv, OpenAlex, PubMed Central, Europe PMC, bioRxiv and medRxiv, and CORE, while broader aggregators consolidate search across PubMed, Google Scholar, arXiv, and additional databases for teams that prioritize breadth.

Can one MCP server search both patents and papers?

Open-source MCP servers generally specialize, with patent connectors covering patent authorities and academic connectors covering scientific repositories, so searching both usually means running multiple servers or using a domain-oriented platform that unifies the patent and scientific records behind a single agent.

Do these MCP servers work with Claude?

Yes. Most of the patent and paper MCP servers on this list document integration with Claude Desktop and Claude Code, allowing Claude to call their search and retrieval tools and return structured results from the underlying sources.

Are the open-source patent and paper MCP servers free?

The software is generally free and open-source, but several depend on external services with their own requirements, such as a USPTO Open Data Portal API key, a Google Cloud project with BigQuery billing, or a third-party search key, so the connector is free while the data access may not be.

What is context rot and why does it matter for patent and paper research?

Context rot is the degradation of an AI model's accuracy as its context window fills, an architectural effect rather than a tuning problem. It matters for patent and paper research because these documents are long and dense, so loading a broad dataset wholesale can reduce reasoning quality, which is why domain-oriented agents that retrieve a scoped, high-signal subset tend to outperform connectors that open an entire corpus.

How do I choose between an MCP connector and a domain-oriented agent?

Choose a broad-dataset connector when the need is direct, low-cost access to a specific patent office or repository for experimentation, and choose a domain-oriented agent when the work requires scoped reasoning across the full patent and scientific record, enterprise-grade security, and workflows like landscape analysis or freedom-to-operate that depend on domain context rather than raw retrieval.

An MCP server for patents is a connector that lets an AI assistant query patent data directly, turning a manual database search into a natural-language request the model can execute on its own. Built on the Model Context Protocol, the open standard introduced by Anthropic and now adopted across the major AI platforms, these servers expose patent search, document retrieval, and metadata lookup as tools an agent can call mid-conversation [1]. As of 2026 the category is real and growing, and almost all of it does one thing: it delivers broad dataset access. The more important question for R&D and IP teams is whether broad access is what they actually need, because the evidence increasingly says it is not.

The distinction that defines this space is between a connector that hands a model a broad dataset and an agent built around a specific domain. A patent MCP server gives the base model a firehose of raw records from one authority and leaves all of the reasoning to the model. A domain-oriented agent is purpose-built around a field's data, ontology, and workflows, so it knows which high-signal information to retrieve and how to reason about the problem rather than receiving a broad dataset and being left to figure it out. The open-source MCP ecosystem has solved access. The harder and more valuable problem is the agent.

What a patent MCP server actually delivers

The protocol is straightforward. An MCP host such as Claude Desktop or Claude Code runs a client that discovers available servers and translates the model's intent into structured tool calls [1]. A patent MCP server is the service on the other side, holding the logic to authenticate to a patent API, format the query, and return claims, abstracts, assignees, or prosecution history. The practical gain is real, because a model working only from open web results frequently confuses filing dates with publication dates or extracts incomplete claim text from messy HTML, and a dedicated connector removes that failure mode [6]. What the connector delivers, though, is access to a dataset. It does not decide what within that dataset matters for a given research question.
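
To make the tool-call exchange concrete, here is a minimal sketch of the JSON-RPC 2.0 message shape MCP uses for `tools/call`, with a toy in-memory handler standing in for the server. The tool name `get_patent`, the `publication_number` argument, and the sample record are hypothetical, and a real server would use an MCP SDK and a transport rather than plain function calls.

```python
import json

# Hypothetical stand-in for a patent API; the record below is invented.
FAKE_PATENTS = {
    "US-0000001-A": {
        "assignee": "Example Corp",
        "filing_date": "2020-01-15",
        "publication_date": "2021-07-22",
    }
}


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Client side: serialize a JSON-RPC 2.0 'tools/call' request."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def handle_request(raw: str) -> dict:
    """Server side: dispatch the call and wrap the result as text content."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        return {"jsonrpc": "2.0", "id": req.get("id"),
                "error": {"code": -32601, "message": "Method not found"}}
    params = req["params"]
    if params["name"] != "get_patent":  # hypothetical tool name
        return {"jsonrpc": "2.0", "id": req["id"],
                "error": {"code": -32602, "message": "Unknown tool"}}
    record = FAKE_PATENTS.get(params["arguments"]["publication_number"])
    return {
        "jsonrpc": "2.0",
        "id": req["id"],
        "result": {
            "content": [{"type": "text", "text": json.dumps(record)}],
            "isError": record is None,
        },
    }


request = build_tool_call(1, "get_patent", {"publication_number": "US-0000001-A"})
response = handle_request(request)
patent = json.loads(response["result"]["content"][0]["text"])
print(patent["filing_date"], patent["publication_date"])
```

Because the filing date and publication date arrive as separate structured fields, the model does not have to infer which is which from scraped page text.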

The open-source field, mapped by the dataset it opens

Read across the available servers and they sort cleanly by which broad dataset they expose. On the United States side, two closely related FastMCP projects cover the full breadth of USPTO data, one offering 51 tools across six data sources including Patent Public Search, the Open Data Portal, the PTAB API, Office Actions, and litigation endpoints, with integration paths for Claude Desktop and Claude Code [3]. A companion project offers a comparable set and is candid that of its 52 tools only 27 are currently active, the rest disabled because the underlying government APIs have been retired or migrated [2]. For reach beyond the United States, the common route is Google Patents, whether through a connector that pairs USPTO access with a BigQuery bridge to roughly 90 million publications across more than 17 countries [4], or a lighter project that reaches Google Patents through a third-party search service and installs in a single command [5]. The most enterprise-minded option links AI clients to the European Patent Office, the USPTO, and the German DPMA, and offers both hosted and on-premises deployment for teams with confidentiality requirements [6]. Every one of these is a high-quality way to open a dataset. None of them is a domain-oriented agent.

Why more data behind a connector does not make a smarter agent

The instinct to put the largest possible dataset behind an MCP server runs directly into what research on context engineering has established. Anthropic's own guidance frames the goal of an effective agent as finding the smallest set of high-signal tokens that produce the desired outcome, not the most tokens [8]. The reason is architectural. As a context window fills, model accuracy degrades, a phenomenon now widely described as context rot, because the transformer has to track an exploding number of relationships between tokens and begins to lose the thread [9]. Stanford's "lost in the middle" work showed that models use information placed in the middle of a long context far less reliably than information at the beginning or end, and a 2025 study across eighteen leading models, including frontier systems from every major lab, found that performance grows steadily less reliable as input length increases even on trivial tasks [9]. In practice, teams report a hard performance ceiling around a million tokens regardless of the advertised window size [9].
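
The "exploding number of relationships" can be made concrete with simple arithmetic: among n tokens there are n(n-1)/2 distinct pairs, so a tenfold increase in context length means roughly a hundredfold increase in pairs. The snippet below only counts pairs; it is a simplification offered for intuition, not a model of how accuracy degrades.

```python
def token_pairs(n_tokens: int) -> int:
    """Number of distinct token pairs in a context of n_tokens."""
    return n_tokens * (n_tokens - 1) // 2


for n in (1_000, 10_000, 100_000):
    print(f"{n:>7,} tokens -> {token_pairs(n):,} pairs")
```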

The implication for patent work is direct. A connector that can pour an entire patent corpus into context is not an advantage if the agent does not know which slice of that corpus is signal and which is noise. Broad dataset access shifts the entire burden of domain reasoning onto the base model, which is precisely the burden the research says the model handles poorly at scale. The same fragmentation compounds the problem, because a complete R&D question spans the patent record and the scientific record, yet the open-source connectors keep them in separate silos, leaving a parallel set of community servers to handle arXiv, PubMed, and Semantic Scholar on their own [10]. Stitching broad datasets together does not produce domain intelligence. It produces a larger pile for the model to get lost in.

From broad datasets to domain-oriented agents

The more durable pattern inverts the relationship. Instead of exposing a broad dataset and hoping the base model can reason over it, a domain-oriented agent is shaped around the domain itself, so that retrieval is scoped before it ever reaches the model's context. This is the position Cypris occupies. Its agent and report layer, Cypris Q, runs patent landscape analysis, white space mapping, freedom-to-operate, technology scouting, and agentic monitoring as domain workflows rather than as raw queries, which means the agent already knows how to frame the problem the way an R&D scientist would. Underneath it, a proprietary R&D ontology provides the semantic structure that lets the agent pull a high-signal subset of patents and scientific literature rather than a broad dump, and custom corpus configuration lets a team focus that retrieval on the curated literature relevant to their question. This is context engineering applied to R&D, and it is the practical answer to context rot.

The corpus matters here, but as substrate rather than headline. Cypris unifies more than 500 million patents and scientific papers so that the domain agent has the patent and scientific records in one place rather than across siloed connectors, and official enterprise API partnerships with OpenAI, Anthropic, and Google let that intelligence sit behind the AI tools teams already use, with enterprise-grade security built to Fortune 500 requirements [11]. Where the open-source MCP servers were built for developers reaching raw endpoints, the domain agent is built for the R&D scientists and innovation strategists who need a scoped, reasoned answer rather than a broad dataset. For experimentation, the community connectors are a genuine and welcome development. For R&D intelligence that has to reason correctly at scale, the direction of the category is the domain-oriented agent.

FAQ

What is an MCP server for patents?

An MCP server for patents is a connector built on the Model Context Protocol that lets an AI assistant query patent databases directly, retrieving claims, abstracts, and prosecution history as structured tools the model can call, rather than information it has to scrape from the open web. It delivers access to a patent dataset but leaves the domain reasoning to the underlying model.

What is the difference between a patent MCP connector and a domain-oriented agent?

A patent MCP connector gives an AI model broad access to a patent dataset and leaves the model to decide what matters, while a domain-oriented agent is purpose-built around the field's ontology and workflows so it already knows which high-signal information to retrieve and how to reason about a patent problem. The connector opens the dataset; the agent solves the question.

Does putting more patent data behind an MCP server make an AI agent smarter?

Not on its own. Research on context engineering shows that model accuracy degrades as a context window fills, an effect known as context rot, so flooding an agent with a broad patent dataset can reduce reasoning quality rather than improve it. The advantage comes from retrieving the smallest high-signal subset, which requires domain scoping the model does not perform by itself.

Is there an MCP server for USPTO patent data?

Yes. Several open-source FastMCP projects expose United States Patent and Trademark Office data through the Model Context Protocol, covering Patent Public Search, the Open Data Portal, the PTAB API, Office Actions, and litigation endpoints, with tool counts above fifty, though some tools are inactive where the underlying government APIs have been retired.

Can Claude search patents using MCP?

Yes. Multiple patent MCP servers document integration with Claude Desktop and Claude Code, allowing Claude to call patent-search and document-retrieval tools and return results from sources such as the USPTO, the EPO, and Google Patents.

What is the best MCP server for patent data?

There is no single best option, because each open-source patent MCP server specializes in a particular dataset, with USPTO-focused projects offering the deepest American coverage, BigQuery connectors reaching Google Patents publications across more than 17 countries, and a multi-office project covering the EPO and German DPMA. The more important choice is whether broad dataset access is sufficient or whether the work calls for a domain-oriented agent.

Can an MCP server search both patents and scientific papers?

Generally not in one tool. Patent MCP servers connect to patent authorities while a separate set of community servers connects to scientific sources such as arXiv, PubMed, and Semantic Scholar, so combining both records usually requires running multiple servers or using a platform that unifies patent and scientific literature behind a single domain agent.

Why does context rot matter for patent research with AI?

Context rot matters because patent research often involves large volumes of dense technical text, and as that text accumulates in an agent's context window its reasoning accuracy declines. A domain-oriented agent mitigates this by using an ontology to retrieve only the high-signal patents and papers relevant to a question rather than loading a broad dataset wholesale.

Are open-source patent MCP servers production-ready?

By their maintainers' own framing, most are reference implementations meant to demonstrate the protocol rather than hardened production systems, and they depend on public APIs that can change without notice, so teams with mission-critical needs should evaluate stability, security, and the absence of a domain reasoning layer carefully.

What are the security risks of using a patent MCP server?

Because most patent MCP servers forward queries to external patent office APIs, sensitive research intent can travel to third-party systems, which is why some projects offer on-premises deployment so that only necessary requests reach the patent office directly and no intermediary handles confidential queries.

AI patent and paper intelligence platforms are a distinct enterprise software category that unifies patent data, scientific literature, and other technical sources into a single AI-searchable corpus designed for corporate R&D and innovation teams. The category emerged because the questions R&D leaders actually ask (what is being invented in this space, who is moving fastest, where the white spaces are) cannot be answered by patent databases or scientific search engines in isolation. A modern AI patent and paper intelligence platform combines semantic search, retrieval-augmented generation, agentic workflows, and a structured technical ontology over hundreds of millions of documents, so a single query can surface the relevant patents, papers, and signals an R&D team needs to make a decision.

This category is not a rebrand of patent search. Patent search tools were designed for episodic legal work performed by trained patent professionals. AI patent and paper intelligence platforms are designed for continuous use by R&D scientists, innovation strategists, and technology scouts who treat intelligence as infrastructure rather than a project.

Why the Category Exists

For most of the last two decades, technical intelligence at large companies was split across two parallel stacks. Patent professionals worked inside legacy patent platforms built for prior art and prosecution workflows. Scientists worked inside academic literature databases and citation tools. The two stacks rarely connected, and neither was designed to answer the integrated questions R&D directors actually ask.

That separation collapsed for three reasons. The first is volume. The World Intellectual Property Organization reported more than 3.55 million patent applications filed globally in 2023, the highest figure on record, and global scientific publication output now exceeds 3 million peer-reviewed articles per year [1][2]. No human team can read across that volume manually, and keyword search degrades sharply as corpus size grows.

The second reason is the convergence of patents and papers as evidence. In emerging fields such as solid-state batteries, generative biology, and advanced materials, the leading signal often appears first in a preprint or conference paper, then in a patent filing months or years later. A team that monitors only patents sees the lagging indicator. A team that monitors only literature misses the commercial intent. Modern technical decisions require both sources analyzed together.

The third reason is the maturation of large language models and retrieval-augmented generation. Until recently, semantic search across heterogeneous technical corpora was a research problem. With current frontier models and structured retrieval, it is now a product category. The same architecture that allows a model to summarize an inbox can, with the right corpus and the right ontology, summarize the state of the art in a technology domain.

The result is a new category of enterprise software. Not a patent database with an AI feature added on, and not a chatbot pointed at PubMed, but a purpose-built platform layer that treats patents, scientific papers, and other technical signals as a unified intelligence substrate for R&D teams.

What Defines a Platform Rather Than a Tool

The distinction between a tool and a platform is consequential when budgets reach enterprise scale. A tool answers a query. A platform supports a function. AI patent and paper intelligence platforms share several characteristics that separate them from search tools that have added an AI feature.

The first is unified corpus depth. A platform integrates hundreds of millions of patents from major jurisdictions with scientific literature from peer-reviewed journals, preprint servers, and conference proceedings, alongside other technical sources such as grant data, regulatory filings, and product disclosures. The leading platforms in this category cover 500 million or more technical documents and continuously ingest new ones. Search tools that cover a single source type, however polished, cannot answer cross-domain questions.

The second is a structured technical ontology. Raw vector search across heterogeneous technical documents produces noisy results because the same concept is described differently in patents, papers, and product literature. A purpose-built R&D ontology encodes the relationships between technical concepts, materials, mechanisms, and applications, so a semantic query for, say, sulfide solid electrolytes returns the relevant evidence regardless of whether a given document uses that exact phrase. Ontology quality is one of the most important and least visible differentiators in this category.
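
As a rough illustration of how an ontology lets a query match documents that never use the query's exact phrase, the sketch below expands a concept into related terms before matching. The `ONTOLOGY` dictionary is a hand-built toy with a single entry, not any vendor's schema, and substring matching stands in for real retrieval.

```python
# Toy ontology: one concept mapped to terms that describe the same material class.
ONTOLOGY = {
    "sulfide solid electrolyte": [
        "thiophosphate electrolyte",
        "argyrodite",
        "Li6PS5Cl",
        "LGPS",
    ],
}


def expand_query(concept: str) -> set:
    """Return the concept plus its ontology terms, lowercased for matching."""
    terms = {concept.lower()}
    terms.update(t.lower() for t in ONTOLOGY.get(concept.lower(), []))
    return terms


def matches(document_text: str, concept: str) -> bool:
    text = document_text.lower()
    return any(term in text for term in expand_query(concept))


docs = {
    "PAT-1": "An argyrodite Li6PS5Cl separator layer for all-solid-state cells",
    "PAT-2": "Oxide garnet LLZO pellets sintered at 1200 C",
}

hits = [doc_id for doc_id, text in docs.items()
        if matches(text, "sulfide solid electrolyte")]
print(hits)
```

PAT-1 never contains the phrase "sulfide solid electrolyte", yet it is retrieved because the ontology links the concept to argyrodite chemistry.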

The third is agentic workflow support. A search box returns documents. A platform produces deliverables. Modern AI patent and paper intelligence platforms include agentic systems that can run multi-step research workflows, retrieve evidence across the corpus, synthesize findings, and produce structured reports such as landscape analyses, white space maps, and competitor profiles. These workflows are what allow a small R&D intelligence team to support a large innovation organization.

The fourth is enterprise-grade infrastructure. Corporate R&D intelligence touches sensitive competitive information, regulated industries, and confidential project context. A platform suitable for Fortune 500 deployment must offer security that meets those buyers' requirements, along with role-based access controls, audit logging, and data handling guarantees that consumer or free tools do not provide.

The fifth is configurability. Different R&D programs need different views of the world. A platform allows users to configure custom corpuses of patent and non-patent literature scoped to a technology domain, a competitor set, or a strategic initiative. This corpus configuration capability is directly tied to recent research on context engineering, which has shown that focusing a language model on the relevant subset of data, rather than the entire web, materially improves the quality of generated analysis [3].

The Role of AI in the Category

The AI in AI patent and paper intelligence platforms is not a single feature. It is a layered architecture, and the quality of each layer compounds.

At the retrieval layer, semantic embedding models convert technical documents into vector representations that capture meaning rather than surface text. A well-implemented retrieval system surfaces a relevant patent about lithium polymer electrolytes even when the user query uses different terminology, because the underlying concepts are close in embedding space. Retrieval quality on technical content is highly sensitive to the embedding model used, the ontology applied on top, and the cleanliness of the underlying corpus.
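
The notion of concepts being "close in embedding space" is usually measured with cosine similarity. The sketch below uses tiny hand-assigned vectors purely for illustration; a real system would obtain high-dimensional vectors from an embedding model, and the document IDs and dimension meanings here are invented.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy 4-dimensional "embeddings"; the dimensions loosely stand for
# polymer, electrolyte, lithium, and mechanical-housing content.
query = [0.9, 0.8, 0.7, 0.0]        # "solid polymer ion conductor for Li cells"
documents = {
    "PAT-1": [0.8, 0.9, 0.8, 0.1],  # lithium polymer electrolyte patent
    "PAT-2": [0.0, 0.1, 0.2, 0.9],  # battery enclosure bracket patent
}

ranked = sorted(
    ((doc_id, cosine(query, vec)) for doc_id, vec in documents.items()),
    key=lambda pair: pair[1],
    reverse=True,
)
for doc_id, score in ranked:
    print(doc_id, round(score, 3))
```

The query shares no required wording with PAT-1, yet PAT-1 ranks first because the two vectors point in nearly the same direction.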

At the reasoning layer, large language models perform synthesis, comparison, and extraction over retrieved evidence. The frontier models available in 2026, including the Claude 4 series, GPT-5.1, and the o-series reasoning models, have substantially improved on technical comprehension, structured output, and citation behavior compared to the models available even eighteen months ago. Platforms that have integrated official enterprise partnerships with these model providers have access to the strongest available reasoning, with the data handling and privacy guarantees enterprise buyers require.

At the agent layer, orchestrators chain retrieval and reasoning steps together to perform end-to-end workflows. An agent tasked with producing a competitive landscape on a technology domain might iterate across the corpus, identify the leading assignees, retrieve their representative patents and publications, summarize each one, build a comparison matrix, and produce a written report with citations. Recent research on agentic context compression suggests that models perform better when given concise, well-structured claims rather than dense source material, which is why high-quality ingestion and ontology work matters even more in the agent era [4].
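
The chained steps described above (find the leading assignees, pull their documents, summarize each, assemble a comparison) can be sketched as a small pipeline. Everything here is a stand-in: the corpus is invented, the company names are fictional, and `summarize` is a placeholder where a real agent would call a language model.

```python
from collections import Counter

# Invented toy corpus; assignee names are fictional.
CORPUS = [
    {"id": "P1", "assignee": "Acme Energy", "kind": "patent", "title": "Sulfide electrolyte separator"},
    {"id": "P2", "assignee": "Acme Energy", "kind": "patent", "title": "Garnet electrolyte coating"},
    {"id": "S1", "assignee": "Acme Energy", "kind": "paper", "title": "Interface stability in solid-state cells"},
    {"id": "P3", "assignee": "Beta Materials", "kind": "patent", "title": "Lithium metal anode protection"},
    {"id": "S2", "assignee": "Beta Materials", "kind": "paper", "title": "Dendrite growth in ceramic electrolytes"},
    {"id": "P4", "assignee": "Gamma Labs", "kind": "patent", "title": "Cell stack pressure fixture"},
]


def leading_assignees(corpus, top_n):
    """Step 1: rank assignees by document count."""
    return Counter(doc["assignee"] for doc in corpus).most_common(top_n)


def summarize(doc):
    """Step 3 placeholder: a real agent would call a language model here."""
    return f"{doc['kind']}: {doc['title']}"


def build_landscape(corpus, top_n=2):
    """Chain the steps into one comparison row per leading assignee."""
    report = []
    for assignee, count in leading_assignees(corpus, top_n):
        docs = [d for d in corpus if d["assignee"] == assignee]  # step 2: retrieve
        report.append({
            "assignee": assignee,
            "documents": count,
            "patents": sum(d["kind"] == "patent" for d in docs),
            "papers": sum(d["kind"] == "paper" for d in docs),
            "evidence": [(d["id"], summarize(d)) for d in docs],  # keeps citations
        })
    return report


landscape = build_landscape(CORPUS)
for row in landscape:
    print(row["assignee"], row["patents"], row["papers"])
```

Keeping the document IDs alongside each summary is what lets the final report cite its evidence.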

The combination of retrieval, reasoning, and agent layers is what allows a modern platform to take a question such as "What is the competitive position of company X in solid-state batteries?" and return a structured answer in minutes rather than after weeks of analyst time.

Use Cases That Justify the Category

The use cases that justify investment in an AI patent and paper intelligence platform are the ones where speed and breadth matter more than legal precision. These are not patent attorney workflows. They are R&D and strategy workflows.

Technology scouting is one of the clearest examples. When an innovation team needs to identify emerging approaches to a problem, the relevant evidence is spread across patent filings, recent papers, startup disclosures, and grant awards. A unified AI platform allows a scout to surface candidates across all these sources, cluster them by approach, and produce a shortlist in days rather than months.

Competitive landscape analysis is another. Understanding a competitor's technical trajectory requires reading across their patent portfolio and their scientific publications, then identifying where the two diverge from public product disclosures. Platforms with agentic synthesis can produce competitor profiles that integrate all three signals.

White space and opportunity mapping benefits especially from cross-source intelligence. The most interesting technical opportunities are often the gaps between heavy patent activity and heavy publication activity, or the spaces where academic momentum is building but commercial filings have not yet appeared. These patterns are invisible inside a single-source tool.
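
One way to make the gap between publication activity and patent activity concrete is a simple threshold filter over per-topic counts. The topic labels, counts, and thresholds below are illustrative assumptions, not data from any real landscape, and a real analysis would likely normalize by field size and time window.

```python
def white_space_candidates(activity, min_papers=20, max_patents=5):
    """Return topics with academic momentum but few commercial filings.

    `activity` maps a topic label to its paper and patent counts.
    The default thresholds are arbitrary placeholders.
    """
    return sorted(
        topic
        for topic, counts in activity.items()
        if counts["papers"] >= min_papers and counts["patents"] <= max_patents
    )

# Hypothetical counts that only become comparable once both sources are unified.
activity = {
    "topic A": {"papers": 42, "patents": 3},   # papers high, patents low
    "topic B": {"papers": 55, "patents": 60},  # crowded on both sides
    "topic C": {"papers": 4, "patents": 1},    # little activity anywhere
    "topic D": {"papers": 20, "patents": 5},   # exactly at both thresholds
}

candidates = white_space_candidates(activity)
```

The filter needs both counts for the same topic, which is why the pattern cannot be computed inside a tool that indexes only patents or only papers.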

Freedom to operate at the R&D stage is also increasingly handled with AI patent and paper intelligence platforms, although final legal opinions still belong with patent counsel. Early-stage FTO scans performed in-house by R&D teams help engineering leaders make build-versus-pivot decisions before legal hours are spent.

Continuous monitoring rounds out the use case set. Once a corpus is configured for a strategic area, agents can surface new patents and papers as they appear, summarize their relevance, and route them to the right internal stakeholders. This converts patent and paper intelligence from a periodic study into an ongoing capability.

Evaluation Criteria for Enterprise R&D Buyers

R&D directors and innovation leaders evaluating platforms in this category should weigh several criteria that map to the structural definitions above.

Corpus coverage is the first. The platform should integrate patent data from all major jurisdictions, scientific literature from peer-reviewed and preprint sources, and ideally additional technical signals such as grants, clinical trials, and regulatory filings. Total document counts matter, but freshness, completeness of metadata, and coverage of non-English sources matter more.

Semantic search quality is the second. The most reliable way to evaluate this is to run real queries from the buyer's own technical domain and inspect the top results. Embedding quality and ontology quality are difficult to assess from marketing materials alone.
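
A buyer running real queries can turn the inspection of top results into a number with precision at k, a standard retrieval metric. The document identifiers and relevance judgments below are hypothetical; in practice the relevant set would come from a domain expert reviewing each platform's results for the same query.

```python
def precision_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the top-k results that were judged relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    top_k = ranked_ids[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant_ids)
    return hits / k

# Hypothetical ranking returned by a platform for one domain query.
ranked = ["D4", "D9", "D1", "D7", "D3"]

# Hypothetical expert judgments for that query.
relevant = {"D4", "D1", "D3", "D8"}

p_at_3 = precision_at_k(ranked, relevant, 3)
p_at_5 = precision_at_k(ranked, relevant, 5)
```

Computing the same metric over a fixed set of queries for each vendor gives a like-for-like comparison that does not depend on marketing claims.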

Agent and report quality is the third. A platform that produces a clean landscape report with proper citations and a defensible structure delivers materially more value than one that returns a chat answer. Buyers should ask vendors to run an agent task on a sample domain during evaluation.

Enterprise infrastructure is the fourth. Security posture, data handling commitments, single sign-on, audit logging, and the ability to meet Fortune 500 procurement requirements should be confirmed early. Tools that cannot pass enterprise security review will stall regardless of search quality.

Audience fit is the fifth. A platform built for patent attorneys typically defaults to legal workflows and terminology that R&D users find friction-laden. A platform built for R&D scientists and innovation strategists defaults to the language and outputs those users need. The mismatch is rarely fixable through training.

Configurability is the sixth. The ability to define custom corpuses, save them, share them across teams, and route updates from them is what turns a search platform into a research function.

Pricing structure is the final criterion. Enterprise platforms in this category are priced for sustained organizational use, not per-search consumption. Buyers should map the expected number of seats, the breadth of teams using the platform, and the report and monitoring volumes against the proposed contract.

Where the Category Is Going

The trajectory of AI patent and paper intelligence platforms over the next eighteen months follows the broader trajectory of enterprise AI. Three shifts are already visible.

The first is deeper agent integration. Platforms are moving from question-answering toward autonomous research workflows where an agent runs for minutes or hours and returns a finished deliverable. This compresses the work cycle for R&D intelligence functions and makes ambitious use cases such as cross-portfolio monitoring practical for teams that previously could not staff them.

The second is custom corpus standardization. The recognition that focusing models on the right subset of data improves output is reshaping product design. Configurable corpuses scoped to a technology, a competitor set, or a project are becoming the default rather than the exception, in line with the broader move toward context engineering in applied AI [3].

The third is enterprise model partnerships. Platforms with official enterprise API partnerships with the leading model providers, including OpenAI, Anthropic, and Google, have a structural advantage in both capability and compliance. Frontier models change frequently, and the platforms wired into the official enterprise pipelines benefit from each new release without renegotiating data handling terms.

The net effect is that AI patent and paper intelligence platforms are evolving from search experiences into research infrastructure. The buyers who treat them as the latter, rather than as a faster keyword search, will extract the most value.

A Note on Cypris

Cypris is an enterprise R&D intelligence platform built specifically for the use cases described above. The platform unifies more than 500 million patents and scientific papers into a single corpus accessible through semantic search and agentic workflows, with a proprietary R&D ontology designed to understand the relationships between technical concepts across patents and literature. Cypris holds official enterprise API partnerships with OpenAI, Anthropic, and Google, allowing the platform to deliver frontier model capabilities under enterprise data handling terms. Cypris Q, the platform's AI agent and report-generation layer, produces structured landscape analyses, competitor profiles, and white space maps that R&D teams use as primary deliverables rather than supporting research. The platform supports configurable custom corpuses of patent and non-patent literature, allowing organizations to focus their intelligence work on the technology domains, competitor sets, and strategic initiatives that matter to them. Cypris is built for R&D scientists and innovation strategists rather than IP attorneys, and is trusted by hundreds of enterprise customers and Fortune 500 R&D teams operating in regulated, security-conscious environments.

Most large R&D organizations now run some form of tech scouting. The shape varies enormously. A few companies have a dedicated technology scout sitting in the CTO's office producing quarterly horizon reports. More common is an innovation team that runs scouting sprints around specific themes when leadership asks for one. Increasingly common is some form of AI-assisted scouting workflow — a set of saved searches at the simple end, an agentic monitoring system at the more sophisticated end. The output quality across these approaches differs by an order of magnitude, and the most consequential variable separating the strong versions from the weak ones is not which AI model is underneath. It is how the scouting agent has been designed.

This guide is for innovation leaders, CTOs, R&D directors, BD and partnership teams, and corporate venture groups who want tech scouting to function as a continuous capability rather than a periodic deliverable. It explains what a tech scouting agent actually is, why agents that surface real intelligence look different from agents that produce volume, and how to design a scouting workflow that compounds value over time rather than restarting from zero every quarter.

What Tech Scouting Actually Has to Cover

Tech scouting is a forward-looking workflow. The question is not what the established competitive landscape looks like today; the question is what is emerging that the company should know about, where it is emerging, and why it matters to the strategy. That framing changes everything about how the work has to be done.

Scouting answers a small number of recurring questions. What new technologies are gaining momentum in areas adjacent to where we play? Which startups are forming around technical approaches that could disrupt our roadmap, and which could we partner with or acquire? Which research groups are producing work that will become commercially significant in three to five years, and what would it take to engage them? Which capabilities should we be building internally versus sourcing externally? Which competitors are quietly building positions in spaces we have not yet committed to? These questions do not have one-time answers. The answer this quarter and the answer next quarter are different, and the difference is precisely the signal the scouting workflow exists to capture.

The evidence base for these questions is messy and multi-source by nature. Scientific publications and preprints carry the earliest signal of where research is heading. Patent filings carry a slightly later but more strategically committed signal of where companies and inventors are placing technical bets. Startup formations, funding rounds, and corporate venture activity reveal where capital is moving and which technical theses sophisticated investors are willing to back. Government grants, program awards, and procurement filings flag where strategic priorities and non-dilutive funding are concentrating. Conference proceedings, technical talks, hiring patterns, regulatory filings, and the surrounding signal in trade press and industry analyst coverage round out the picture. Each source carries a different slice of the truth. None of them is sufficient on its own.

The implication is that a scouting agent watching one source — even a comprehensive one — produces a partial view. The signal that matters in scouting is usually cross-source. When a research group publishes three papers on a novel approach over eighteen months, when one of those authors leaves their academic position, when a small entity forms with a credible founding team and raises seed capital, when a corporate venture arm participates in the round, when an early grant award appears for the same research direction — none of those events is decisive on its own. Together, they are an emergence signal worth a senior leader's attention. An agent that sees only one source misses most of the picture. The intelligence is in the connection.
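
The cross-source logic can be sketched as a count of distinct source types per research direction. The event records, topic labels, and the threshold of three sources are assumptions made for illustration; a real agent would also need entity resolution and time windows, which this sketch omits.

```python
from collections import defaultdict

def emergence_candidates(events, min_sources=3):
    """Return topics whose events span at least `min_sources` distinct source types."""
    sources_by_topic = defaultdict(set)
    for event in events:
        sources_by_topic[event["topic"]].add(event["source"])
    return {
        topic: sorted(sources)
        for topic, sources in sources_by_topic.items()
        if len(sources) >= min_sources
    }

# Hypothetical event stream, already tagged with a research direction.
events = [
    {"topic": "direction X", "source": "paper"},
    {"topic": "direction X", "source": "paper"},
    {"topic": "direction X", "source": "funding"},
    {"topic": "direction X", "source": "grant"},
    {"topic": "direction Y", "source": "paper"},
    {"topic": "direction Y", "source": "paper"},
    {"topic": "direction Y", "source": "paper"},
]

flagged = emergence_candidates(events)
```

Direction Y has more events than the threshold but all from one source type, so it is not flagged; direction X is flagged because papers, funding, and a grant converge on it.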

This is the workflow that older tools were not built for. Most legacy systems organize the world by source — a startup database here, a literature index there, a patent tool somewhere else, with the connections drawn by an analyst pivoting between tabs. The connection is the work. Doing that work continuously, across thousands of emergence events per week, in dozens of technology and business areas, is not a workload a team of human scouts can sustain. It is the workload tech scouting agents exist to absorb.

What a Tech Scouting Agent Actually Does

Most R&D and innovation organizations that say they have a tech scouting capability today are running a combination of saved Google Alerts, periodic searches in different databases, conference attendance, broker calls, and read-throughs of analyst reports. The work is real but episodic. Someone reads the alerts. Someone summarizes the conference. Someone reviews the analyst report. The interpretive work happens in a person's head, the institutional memory fades when they move on, and the next person to ask the same scouting question starts from a blank page.

A tech scouting agent inverts this pattern. The agent runs a defined scouting thesis continuously across the relevant evidence corpus, evaluates each new signal against the thesis using interpretive reasoning rather than keyword matching, dismisses what does not warrant attention, and escalates what does with a written rationale that explains why. The interpretive work moves from a person's head into a system that runs every day, applies consistent criteria, and produces a record the team can audit and refine.
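
The evaluate, dismiss, and escalate loop with an auditable record can be sketched as below. The names `Decision`, `triage`, and `toy_evaluate` are hypothetical, as is the 0.7 threshold. The evaluator is passed in as a function because in a real agent it would be model-based reasoning against the thesis; the stand-in here simply reads a precomputed relevance value so the example stays runnable.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    signal_id: str
    escalate: bool
    rationale: str

def triage(signals, evaluate, threshold=0.7):
    """Score each signal with `evaluate` and log every decision for later audit."""
    log = []
    for signal in signals:
        score, rationale = evaluate(signal)
        log.append(Decision(signal["id"], score >= threshold, rationale))
    return log

def toy_evaluate(signal):
    """Stand-in evaluator (assumption): a real one would reason over the signal text."""
    score = signal["relevance"]
    return score, f"relevance {score:.1f} against thesis"

# Hypothetical incoming signals with precomputed relevance values.
signals = [
    {"id": "S1", "relevance": 0.9},
    {"id": "S2", "relevance": 0.2},
    {"id": "S3", "relevance": 0.5},
]

decisions = triage(signals, toy_evaluate)
escalated = [d.signal_id for d in decisions if d.escalate]
```

Dismissed signals stay in the log alongside escalated ones, so the team can review what the agent filtered out and adjust the thesis or threshold.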

Four functions distinguish a real scouting agent from a saved search with notifications.

It applies a strategic thesis rather than a query. Instead of matching documents against a Boolean string or a vector similarity threshold, the agent evaluates each new signal against a structured description of what the team is trying to learn and why. The thesis is interpretive, not lexical, which means the agent can recognize relevant signals even when the underlying language differs from how the team would have phrased a search.

It runs continuously, not on user-initiated demand. New papers, preprints, patent filings, funding announcements, grant awards, regulatory filings, and corporate disclosures arrive as a continuous stream. An agent designed for scouting evaluates this stream as it arrives, which eliminates the gap between when a relevant signal enters the world and when the team learns about it.

It filters for signal, not match. Most saved searches produce high false-positive rates because the keywords appear in unrelated contexts, or because the technical match is real but the strategic relevance is low. An agent reads each candidate signal, evaluates it against the thesis, and discards what does not pass the relevance bar. The result is a substantially smaller and higher-quality escalation queue.